FAIR and interactive data graphics from a scientific knowledge graph

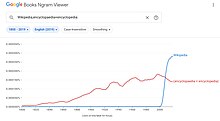

The Google Books Ngram Viewer is an online search engine that charts the frequencies of any set of search strings using a yearly count of n-grams found in printed sources published between 1500 and 2022[1][2][3][4] in Google's text corpora in English, Chinese (simplified), French, German, Hebrew, Italian, Russian, or Spanish.[1][2][5] There are also some specialized English corpora, such as American English, British English, and English Fiction.[6]

The program can search for a word or a phrase, including misspellings or gibberish.[5] The n-grams are matched with the text within the selected corpus, and if found in 40 or more books, are then displayed as a graph.[6] The Google Books Ngram Viewer supports searches for parts of speech and wildcards.[6] It is routinely used in research.[7][8]

History

During development, Google teamed up with two Harvard researchers, Jean-Baptiste Michel and Erez Lieberman Aiden, and quietly released the program on December 16, 2010.[2][9] Before the release, it was difficult to quantify the rate of linguistic change because no database had been designed for this purpose, said Steven Pinker,[10] a well-known linguist who was one of the co-authors of the Science paper published on the same day.[1] The Google Books Ngram Viewer was developed in the hope of opening a new window onto quantitative research in the humanities, and the database, publicly available from the very beginning, contained 500 billion words from 5.2 million books.[2][3][9]

The intended audience was scholarly, but the Google Books Ngram Viewer made it easy for anyone with a computer to see a graph of the diachronic change in the use of words and phrases. Responding to The New York Times, Lieberman Aiden said that the developers aimed to give even children the ability to browse cultural trends throughout history.[9] In the Science paper, Lieberman Aiden and his collaborators called the method of high-volume data analysis in digitized texts "culturomics".[1][9]

Usage

Commas delimit user-entered search terms, where each comma-separated term is searched in the database as an n-gram (for example, "nursery school" is a 2-gram or bigram).[6] The Ngram Viewer then returns a plotted line chart. Note that due to limitations on the size of the Ngram database, only matches found in at least 40 books are indexed.[6]
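The query handling described above can be illustrated with a minimal Python sketch. It is not Google's implementation: the function names `parse_query` and `is_indexed` are hypothetical, and counting whitespace-separated tokens is only an approximation of how the real tokenizer determines the order of an n-gram.

```python
from __future__ import annotations

MIN_BOOKS = 40  # indexing threshold stated in the text above


def parse_query(query: str) -> list[tuple[str, int]]:
    """Split a comma-delimited query into (term, n) pairs.

    n is the number of whitespace-separated tokens in the term, used here
    as an approximation of the n-gram order (assumption, not Google's rule).
    """
    pairs = []
    for raw in query.split(","):
        term = " ".join(raw.split())  # trim and collapse inner whitespace
        if term:  # skip empty terms such as a trailing comma
            pairs.append((term, len(term.split(" "))))
    return pairs


def is_indexed(book_count: int, min_books: int = MIN_BOOKS) -> bool:
    """Return True if a term occurs in enough books to be indexed."""
    return book_count >= min_books


print(parse_query("nursery school, kindergarten"))
# [('nursery school', 2), ('kindergarten', 1)]
```

Under these assumptions, a term found in 39 books would be dropped (`is_indexed(39)` is `False`), while one found in exactly 40 books would be kept.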

Limitations

The data sets of the Ngram Viewer have been criticized for their reliance upon inaccurate optical character recognition (OCR) and for including large numbers of incorrectly dated and categorized texts.[11] Because of these errors, and because the corpora are not controlled for bias[12] (for example, the growing share of scientific literature makes other terms appear to decline in popularity), care must be taken in using the corpora to study language or test theories.[13] Furthermore, the data sets may not reflect general linguistic or cultural change and can only hint at such an effect, because they do not include metadata such as publication date, author, length, or genre, which are withheld to avoid potential copyright infringement.[14]
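The composition bias mentioned above can be shown with a small worked example in Python. It assumes that a term's plotted value is its count divided by the total number of n-grams for that year; the counts are hypothetical and chosen only for illustration.

```python
def relative_frequency(term_count: int, total_count: int) -> float:
    """Share of all n-grams in a given year that match the term."""
    if total_count <= 0:
        raise ValueError("total_count must be positive")
    return term_count / total_count


# Hypothetical counts: the term is used equally often in both years,
# but the corpus doubles in size because more scientific texts are added.
early = relative_frequency(1_000, 1_000_000)
late = relative_frequency(1_000, 2_000_000)

print(early, late)  # 0.001 0.0005
```

The term's absolute usage is unchanged, yet its relative frequency halves, which on a chart would look like a decline in popularity.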

Systematic errors like the confusion of s and f in pre-19th-century texts (due to the use of ſ, the long s, which is similar in appearance to f) can cause systematic bias.[13] Although the Google Books team claims that the results are reliable from 1800 onwards, poor OCR and insufficient data mean that frequencies given for languages such as Chinese may only be accurate from 1970 onward, with earlier parts of the corpus showing no results at all for common terms, and data for some years containing more than 50% noise.[15][16]
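The effect of the long-s confusion on word counts can be sketched in Python as follows. This is a toy simulation, not a model of any real OCR engine: the helper names and the sample sentence are hypothetical, and the misreading is reduced to a plain character substitution.

```python
LONG_S = "\u017f"  # the long s character, ſ


def misread_long_s(text: str) -> str:
    """Simulate an OCR pass that mistakes every long s for the letter f."""
    return text.replace(LONG_S, "f")


def normalize_long_s(text: str) -> str:
    """Map every long s to a modern round s, as a correct reading would."""
    return text.replace(LONG_S, "s")


def count_word(text: str, word: str) -> int:
    """Count exact whitespace-delimited occurrences of word in text."""
    return text.lower().split().count(word)


source = "the beſt of times, the beſt of men"  # hypothetical early-modern text

misread = misread_long_s(source)
correct = normalize_long_s(source)

print(count_word(misread, "best"), count_word(misread, "beft"))  # 0 2
print(count_word(correct, "best"))  # 2
```

In the misread version every occurrence of "best" is counted under the non-word "beft", so the frequency of the real word is understated for the affected period.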

Guidelines have been proposed for research with Google Ngram data that try to address some of the issues discussed above.[17]

References

- Michel, Jean-Baptiste; Shen, Yuan K.; Aiden, Aviva P.; Veres, Adrian; Gray, Matthew K.; The Google Books Team; Pickett, Joseph P.; Hoiberg, Dale; Clancy, Dan; Norvig, Peter; Orwant, Jon; Pinker, Steven; Nowak, Martin A.; Aiden, Erez L. (2010). "Quantitative Analysis of Culture Using Millions of Digitized Books". Science. 331 (6014): 176–182. doi:10.1126/science.1199644. PMC 3279742. PMID 21163965.

- Bosker, Bianca (2010-12-17). "Google Ngram Database Tracks Popularity Of 500 Billion Words". The Huffington Post. Retrieved 2012-05-31.

- Whitney, Lance (2010-12-17). "Google's Ngram Viewer: A time machine for wordplay". Cnet.com. Archived from the original on 2014-01-23. Retrieved 2012-05-31.

- @searchliaison (July 13, 2020). "The Google Books Ngram Viewer has now been updated with fresh data through 2019" (Tweet). Retrieved 2020-08-11 – via Twitter.

- "Google Books Ngram Viewer - University at Buffalo Libraries". Lib.Buffalo.edu. 2011-08-22. Archived from the original on 2013-07-02. Retrieved 2012-05-31.

- "Google Books Ngram Viewer - Information". Retrieved 2024-06-01.

- Greenfield, Patricia M. (2013). "The Changing Psychology of Culture From 1800 Through 2000". Psychological Science. 24 (9): 1722–1731. doi:10.1177/0956797613479387. ISSN 0956-7976. PMID 23925305. S2CID 6123553.

- Younes, Nadja; Reips, Ulf-Dietrich (2018). "The changing psychology of culture in German-speaking countries: A Google Ngram study". International Journal of Psychology. 53: 53–62. doi:10.1002/ijop.12428. PMID 28474338. S2CID 7440938.

- "In 500 Billion Words, New Window on Culture". The New York Times. 2010-12-16. Retrieved 2024-06-01.

- "Steven Pinker – The Stuff of Thought: Language as a window into human nature". Royal Society of Arts. 2010-02-04. Retrieved 2024-06-02 – via YouTube.

- Nunberg, Geoff (2010-12-16). "Humanities research with the Google Books corpus". Archived from the original on 2016-03-10. Retrieved 2015-04-19.

- Pechenick, Eitan Adam; Danforth, Christopher M.; Dodds, Peter Sheridan; Barrat, Alain (2015-10-07). "Characterizing the Google Books Corpus: Strong Limits to Inferences of Socio-Cultural and Linguistic Evolution". PLOS One. 10 (10): e0137041. arXiv:1501.00960. Bibcode:2015PLoSO..1037041P. doi:10.1371/journal.pone.0137041. PMC 4596490. PMID 26445406.

- Zhang, Sarah. "The Pitfalls of Using Google Ngram to Study Language". WIRED. Retrieved 2017-05-24.

- Koplenig, Alexander (2015-09-02). "The impact of lacking metadata for the measurement of cultural and linguistic change using the Google Ngram data sets—Reconstructing the composition of the German corpus in times of WWII". Digital Scholarship in the Humanities. 32 (1). Oxford Academic (published 2017-04-01): 169–188. doi:10.1093/llc/fqv037. ISSN 2055-7671.

- "Google n-grams and pre-modern Chinese". digitalsinology.org. Retrieved 2015-04-19.

- "When n-grams go bad". digitalsinology.org. Retrieved 2015-04-19.

- Younes, Nadja; Reips, Ulf-Dietrich (2019-03-22). "Guideline for improving the reliability of Google Ngram studies: Evidence from religious terms". PLOS One. 14 (3): e0213554. Bibcode:2019PLoSO..1413554Y. doi:10.1371/journal.pone.0213554. ISSN 1932-6203. PMC 6430395. PMID 30901329.

Bibliography

- Lin, Yuri; et al. (July 2012). "Syntactic Annotations for the Google Books Ngram Corpus" (PDF). Proceedings of the 50th Annual Meeting. Demo Papers. 2. Jeju, Republic of Korea: Association for Computational Linguistics: 169–174. 2390499.

Whitepaper presenting the 2012 edition of the Google Books Ngram Corpus