As AI drives renewed interest in historical lab data, pharma R&D teams face new challenges around long-term storage, retrieval, and version control. A robust Lab Data Management System provides the structure to preserve data integrity, traceability, and institutional knowledge across projects, teams, and decades of research.

[Read More]

ISO/IEC 17025:2025 was published on September 27, 2025, and ILAC set the transition deadline at September 30, 2028. Three years sounds generous, but a credible transition requires gap assessment, system selection, validation, migration, parallel run, retraining, and reassessment in sequence. Labs that start in month 30 will not finish in time.

[Read More]

When a global specialty chemicals manufacturer acquired two Canadian sites, the legacy SampleManager LIMS needed a full upgrade and integration with the parent ecosystem. CSols led the migration to v21.1, switched the backend from Oracle to SQL Server, moved the platform to Azure, and delivered a new SM-IDI/SAP interface on time and within budget.

[Read More]

The next generation of scientific discovery will be defined not by which labs adopted AI, but by which ones made it work inside the workflow. Success hinges on whether the underlying informatics platform embeds AI natively or bolts it on as a parallel process. A unified data model, not the AI model itself, is what determines practical value at scale.

[Read More]

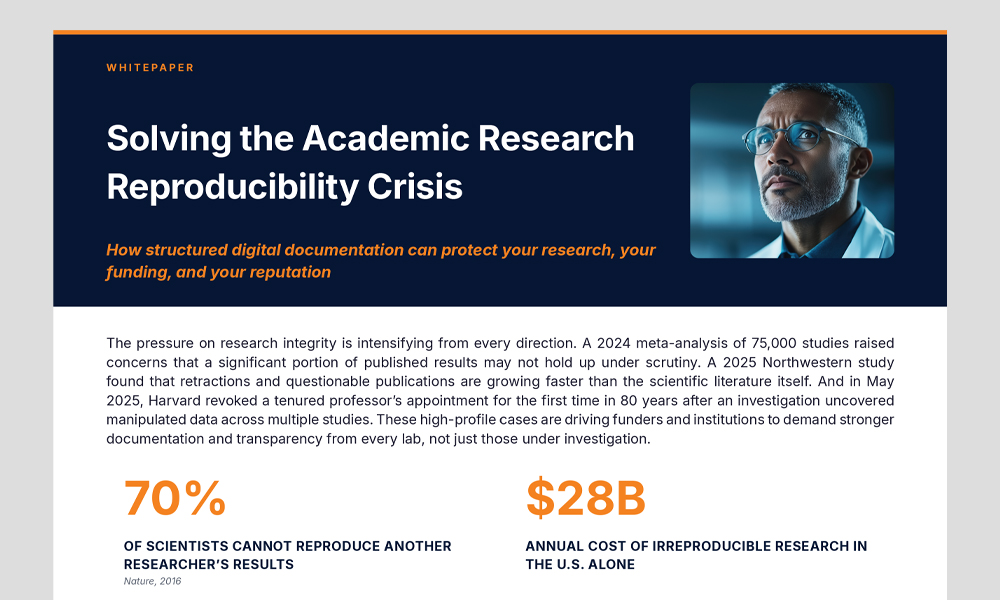

Over 70% of scientists can't reproduce another researcher's results. The cost: $28 billion a year in wasted research. This whitepaper reveals why the crisis is fundamentally a documentation problem, what NIH and institutions are now mandating, and how an electronic lab notebook eliminates the root causes. Includes a free ELN option for academic labs.

[Read More]

If vendors already validate their platforms, and not every feature carries equal risk, why do labs still spend months retesting everything? The shift from Computer System Validation (CSV) to Computer Software Assurance (CSA) promises a smarter, risk-based approach, but it only works when labs have the change visibility to define impact with confidence.

[Read More]

Semaphore is now Labbit. After more than a decade and 400,000 hours working inside complex regulated labs, the team has unified under one name and one mission: a modern LIMS designed around how labs actually operate. Learn why the rebrand reflects a platform built for flexible workflows, FAIR data, and the AI transformation reshaping lab informatics.

[Read More]

Lab AI adoption is moving through three stages: passive ELNs that record work without supporting it, shadow labs where scientists lean on public generative AI and fragment the record, and active labs where governed intelligence lives inside the notebook itself. See the full maturity model and a practical roadmap to get from one to the next.

[Read More]

Stop waiting for perfect data to transform your research. In this episode of Decoding the Digital Lab, Rob Brown of Sapio Sciences pulls back the curtain on how leading labs are moving past AI hype and into real results, including 90% fewer physical compounds and 3x faster discovery phases. Tune in to bridge the gap between AI strategy and lab reality.

[Read More]

Modern labs do not just need more throughput; they need a structured, reliable data foundation behind every scientific decision. See how LabWare redefines LIMS as a strategic asset through configurable workflows, standardized data models, automated instrument capture, and built-in governance that keeps data trustworthy and analysis-ready.

[Read More]

A GxP-validated LIMS is not enough on its own. Auditors scrutinize how labs calibrate equipment, validate methods, investigate deviations, approve changes, and trace reagents end to end, and many of those workflows still live in paper, spreadsheets, and email. With FDA 21 CFR 211.68(b) citations up 55% since 2022, here is how to close the gap.

[Read More]

Under ICH E6(R3), the sponsor owns the integrity of all data, including vendor data, and misalignment can mean fines, production halts, or rejection of clinical data. This white paper is a strategic roadmap for moving your QMS and MES from one-size-fits-all validation to the risk-based, proportional model now expected by the FDA and Health Canada.

[Read More]

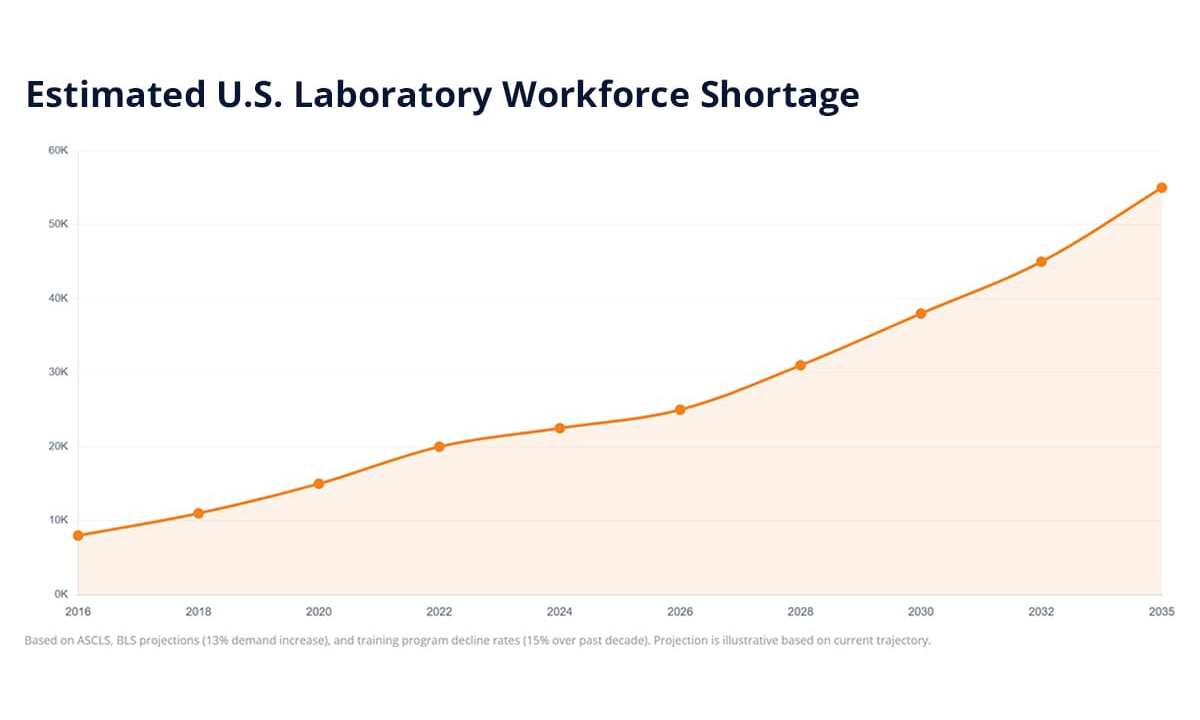

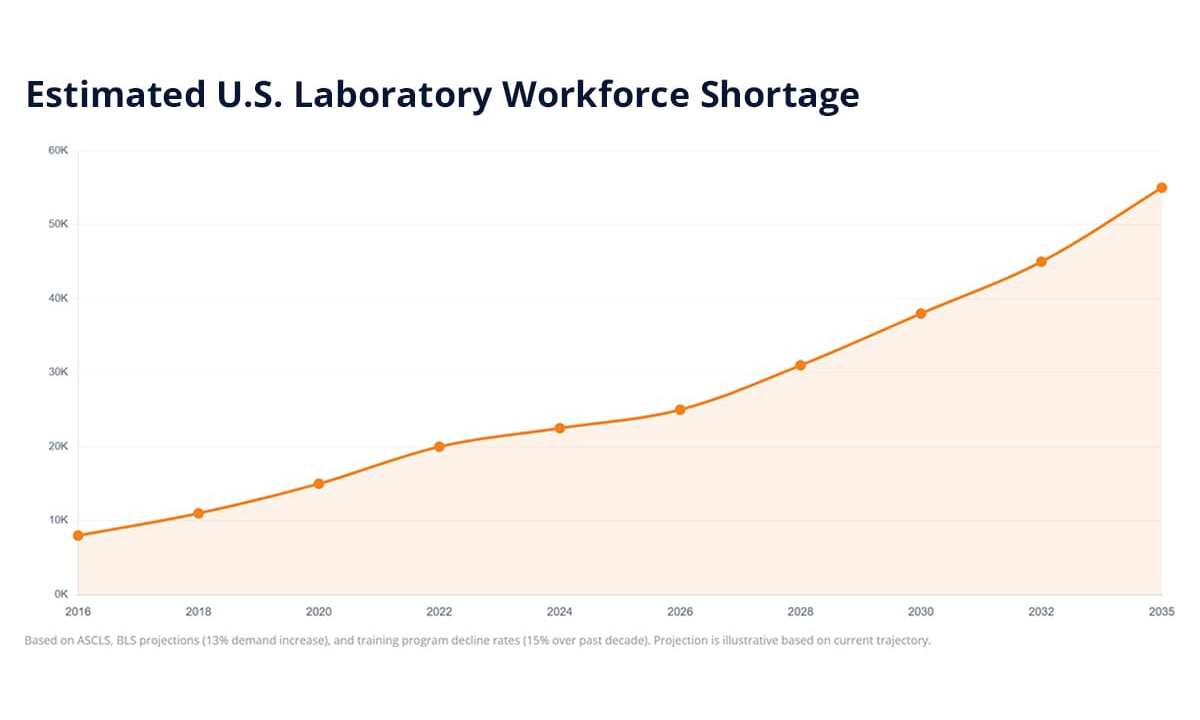

With an estimated 20,000 to 25,000 unfilled laboratory positions across the U.S. and Canada, the staffing crisis is structural, not temporary. The smartest labs in 2026 are shifting their strategy from hiring alone to automating manual workflows, digitizing institutional knowledge, and using LIMS and ELN tools to recover 20-30% of operational capacity.

[Read More]

Kalleid will present at Certainty US 2026 on April 14-15 in Boston, MA. Principal Scientific Application Manager Jonathan Buttrick will discuss improving data quality after registration system migrations, sharing lessons from migrating multiple regional systems into one global Chemaxon Compound Registration deployment at Takeda Pharmaceuticals.

[Read More]

The LabLynx ELN Suite gives academic research groups a full-featured electronic lab notebook at no cost with unlimited users. Whether your team works in life sciences, engineering, environmental research, or teaching labs, the Community Edition is built to support your research and your students through a true academic partnership, not a vendor relationship.

[Read More]

Setting up a new lab? The digital foundation matters as much as the physical one. This article explores how implementing LIMS, ELN, and SDMS from day one helps map research workflows, embed data integrity through ALCOA+ principles, build in regulatory compliance, and enable end-to-end traceability through system integration.

[Read More]

Nearly all lab professionals (97%) now use some form of AI, and 77% turn to public tools like ChatGPT alongside their ELN. The problem? 45% do so through personal accounts, pulling scientific reasoning outside governed workflows. This article explores why labs need context-aware AI inside the notebook, not a crackdown.

[Read More]

Traditional publishing pushes static content to users, but CaaS flips the model by pulling and assembling content on demand. By linking repositories through APIs and delivering only what's relevant, CaaS enables omni-channel customization, reduces storage burden, and accelerates delivery. Learn how CaaS is reshaping technical communications.

[Read More]

Semaphore Solutions has officially rebranded as Labbit. After a decade of helping laboratories tackle complex informatics challenges, spanning hundreds of implementations and over 300,000 hours of work across clinical diagnostics, genomics, and GMP manufacturing, the company has shifted its full focus to the Labbit modern LIMS platform.

[Read More]

New research from Sapio Sciences reveals that 65% of lab professionals report repeating experiments because prior results are too hard to find or reuse. While 81% say their ELN records data effectively, it falls short on interpretation. The findings highlight a growing need for next-generation notebooks that support reuse, context, and decision-making.

[Read More]
