
Scientific citation is the practice of providing detailed references in a scientific publication, typically a paper or book, to previously published (or occasionally private) communications that have a bearing on the subject of the new publication.[citation needed] The purpose of citations in original work is to allow readers of the paper to refer to the cited work to help them judge the new work, to locate background information vital for future development, and to acknowledge the contributions of earlier workers.[citation needed] Citations in a review paper, for example, bring together many sources, often recent ones, in one place.

To a considerable extent, in the absence of other criteria, the quality of work is judged by the number of citations it receives, adjusted for the volume of work in the relevant topic.[citation needed] This is not necessarily a reliable measure, but counting citations is trivially easy, whereas judging the merit of complex work can be very difficult.[citation needed]

Previous work may be cited regarding experimental procedures, apparatus, goals, previous theoretical results upon which the new work builds, theses, and so on. Such citations typically establish the general framework of influences and the mindset of the research, in particular placing the work within a field ("part of what science" it is), and they help determine who conducts the peer review.[citation needed]

In patent law, the citation of previous works, or prior art, helps establish the uniqueness of the invention being described. The focus in this practice is to claim originality for commercial purposes, so the author is motivated to avoid citing works that cast doubt on the invention's originality. In this respect, patent citation does not appear to be "scientific" citation. Inventors and lawyers nevertheless have a legal obligation to cite all relevant art; failing to do so risks invalidating the patent.[citation needed] The patent examiner is obliged to list all further prior art found in searches.[citation needed]

A digital object identifier (DOI) is a persistent identifier or handle used to uniquely identify various objects, standardized by the International Organization for Standardization (ISO).[1] DOIs are an implementation of the Handle System;[2][3] they also integrate with the URI system (Uniform Resource Identifier). They are widely used to identify academic, professional, and government information, such as journal articles, research reports, data sets, and official publications.
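
Because DOIs integrate with the URI system, a DOI can be turned into an actionable web address by prefixing it with the public resolver address https://doi.org/. The sketch below is a minimal illustration, assuming the input is a bare DOI, a `doi:`-prefixed string, or a doi.org URL. The helper names `normalize_doi` and `doi_url` are hypothetical, and the check only tests the basic shape of a DOI (a prefix starting with `10.`, a slash, then a suffix), not whether the DOI is actually registered.

```python
from urllib.parse import quote

DOI_RESOLVER = "https://doi.org/"

# Forms of input this sketch accepts in front of a bare DOI (an assumption, not exhaustive)
KNOWN_PREFIXES = (
    "https://doi.org/",
    "http://doi.org/",
    "https://dx.doi.org/",
    "http://dx.doi.org/",
    "doi:",
)


def normalize_doi(raw: str) -> str:
    """Strip common wrappers and return the bare DOI, e.g. '10.1000/182'."""
    s = raw.strip()
    for prefix in KNOWN_PREFIXES:
        if s.lower().startswith(prefix):
            s = s[len(prefix):].strip()
            break
    # Shape check only: a DOI prefix begins with "10." and is separated from the suffix by "/"
    if not s.startswith("10.") or "/" not in s:
        raise ValueError(f"not a DOI: {raw!r}")
    return s


def doi_url(raw: str) -> str:
    """Build a resolver URL, percent-encoding suffix characters that are unsafe in URLs."""
    return DOI_RESOLVER + quote(normalize_doi(raw), safe="/")


print(doi_url("doi:10.1000/182"))  # https://doi.org/10.1000/182
```

Percent-encoding matters because DOI suffixes may contain characters such as `#` or `?` that would otherwise change the meaning of the URL.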

A DOI aims to resolve to its target, the information object to which the DOI refers. This is achieved by binding the DOI to metadata about the object, such as a URL where the object is located. Thus, by being actionable and interoperable, a DOI differs from identifiers such as ISBNs or ISRCs, which are identifiers only. The DOI system uses the indecs Content Model to represent metadata.

Citation analysis is a method widely used in metascience.

Citation analysis is the examination of the frequency, patterns, and graphs of citations in documents. It uses the directed graph of citations (links from one document to another document) to reveal properties of the documents. A typical aim would be to identify the most important documents in a collection. A classic example is that of the citations between academic articles and books.[4][5] As another example, judges support their judgements by referring back to judgements made in earlier cases (see citation analysis in a legal context). Patents provide a further example: they contain prior art, that is, citations of earlier patents relevant to the current claim. The digitization of patent data and increasing computing power have led to a community of practice that uses these citation data to measure innovation attributes, trace knowledge flows, and map innovation networks.[6]
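
The directed citation graph can be stored as an adjacency list that maps each document to the documents it cites; a document's citation count within the collection is then its in-degree. The following is a minimal sketch over a hypothetical five-document collection. Ranking by raw in-degree is only the simplest way to pick out "important" documents; real analyses often use richer measures.

```python
def citation_counts(references: dict[str, list[str]]) -> dict[str, int]:
    """Return the in-degree of every document in the citation graph.

    `references` maps each citing document to the documents it cites,
    i.e. the outgoing edges of the directed citation graph.
    """
    counts = {doc: 0 for doc in references}  # uncited documents still get 0
    for cited_list in references.values():
        for cited in set(cited_list):  # count each cited document once per citing document
            counts[cited] = counts.get(cited, 0) + 1
    return counts


def most_cited(references: dict[str, list[str]], k: int = 3) -> list[tuple[str, int]]:
    """Return the k most-cited documents, breaking ties alphabetically."""
    counts = citation_counts(references)
    return sorted(counts.items(), key=lambda item: (-item[1], item[0]))[:k]


# Hypothetical toy collection: each document -> the documents it cites
refs = {
    "A": [],
    "B": ["A"],
    "C": ["A", "B"],
    "D": ["A", "C"],
    "E": ["C"],
}
print(most_cited(refs))  # [('A', 3), ('C', 2), ('B', 1)]
```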

Documents can be associated with many other features in addition to citations, such as authors, publishers, and journals, as well as their actual texts. The general analysis of collections of documents is known as bibliometrics, and citation analysis is a key part of that field. For example, bibliographic coupling and co-citation are association measures based on citation analysis (shared references and shared citations, respectively). The citations in a collection of documents can also be represented as a citation graph, as pointed out by Derek J. de Solla Price in his 1965 article "Networks of Scientific Papers".[7] This means that citation analysis draws on aspects of social network analysis and network science.
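
Both association measures can be read directly off the same adjacency list: bibliographic coupling of two documents counts the references they share, while co-citation counts the documents that cite both of them. A minimal sketch, assuming a hypothetical collection; the function names are illustrative only.

```python
def bibliographic_coupling(references: dict[str, list[str]], a: str, b: str) -> int:
    """Number of references shared by documents a and b."""
    return len(set(references.get(a, [])) & set(references.get(b, [])))


def co_citation(references: dict[str, list[str]], a: str, b: str) -> int:
    """Number of documents in the collection that cite both a and b."""
    return sum(1 for cited in references.values() if a in cited and b in cited)


# Hypothetical collection: each document -> the documents it cites
refs = {
    "P1": ["X", "Y", "Z"],
    "P2": ["X", "Z"],
    "P3": ["Y"],
    "Q1": ["P1", "P2"],
    "Q2": ["P1", "P2", "P3"],
    "Q3": ["P1"],
}
print(bibliographic_coupling(refs, "P1", "P2"))  # 2 (both cite X and Z)
print(co_citation(refs, "P1", "P2"))             # 2 (Q1 and Q2 cite both)
```

A practical difference follows from the definitions: coupling depends only on the two documents' own reference lists, which are fixed once they are published, whereas co-citation can keep growing as new citing documents appear.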

An early example of automated citation indexing was CiteSeer, which was used for citations between academic papers, while Web of Science is an example of a modern system that covers more than just academic books and articles, reflecting a wider range of information sources. Today, automated citation indexing[8] has changed the nature of citation analysis research, allowing millions of citations to be analyzed for large-scale patterns and knowledge discovery. Citation analysis tools can be used to compute various impact measures for scholars based on data from citation indices.[9][10][note 1] These have various applications, from identifying expert referees to review papers and grant proposals to providing transparent data in support of academic merit review, tenure, and promotion decisions. The resulting competition for limited resources may lead to ethically questionable behavior aimed at increasing citations.[11][12]
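
The text does not name specific impact measures. One widely used example computed from citation-index data is the h-index: the largest number h such that a scholar has h papers with at least h citations each. A minimal sketch, assuming the per-paper citation counts have already been retrieved from some citation index:

```python
def h_index(citations: list[int]) -> int:
    """Largest h such that h papers have at least h citations each."""
    ranked = sorted(citations, reverse=True)  # most-cited paper first
    h = 0
    for rank, count in enumerate(ranked, start=1):
        if count >= rank:
            h = rank  # the top `rank` papers all have at least `rank` citations
        else:
            break
    return h


print(h_index([10, 8, 5, 4, 3]))  # 4
print(h_index([25, 8, 5, 3, 3]))  # 3
```

The second example shows one of the measure's known properties: a single very highly cited paper does not raise the index on its own.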

A great deal of criticism has been made of the practice of naively using citation analyses to compare the impact of different scholarly articles without taking into account other factors which may affect citation patterns.[13] Among these criticisms, a recurrent one focuses on "field-dependent factors", which refers to the fact that citation practices vary from one area of science to another, and even between fields of research within a discipline.[14]

Modern scientists are sometimes judged by the number of times their work is cited by others, which is a key indicator of the relative importance of a work in science. Accordingly, individual scientists are motivated to have their own work cited early, often, and as widely as possible, but all other scientists are motivated to eliminate unnecessary citations so as not to devalue this means of judgment.[15][citation needed] A formal citation index tracks which refereed and reviewed papers have cited which other such papers. Baruch Lev and other advocates of accounting reform consider the number of times a patent is cited to be a significant metric of its quality, and thus of innovation.[citation needed] Reviews often replace citations to primary studies.[16]
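
One common response to the field-dependent factors described above (not itself discussed in this text) is to divide a paper's citation count by the average count for papers in the same field, so that a score of 1.0 means "cited as often as the field average". The sketch below assumes each paper has already been assigned to a single field; practical indicators typically also normalize by publication year and document type, which this simplified version omits. The field labels and counts are hypothetical.

```python
from collections import defaultdict
from statistics import mean


def field_normalized_scores(papers: list[tuple[str, str, int]]) -> dict[str, float]:
    """Map paper ID -> citations divided by the mean citations of its field.

    papers: (paper_id, field, citation_count) triples.
    """
    by_field: dict[str, list[int]] = defaultdict(list)
    for _, field, cites in papers:
        by_field[field].append(cites)
    field_mean = {field: mean(counts) for field, counts in by_field.items()}
    # A field in which nothing is cited has mean 0; score its papers as 0.0
    return {
        pid: cites / field_mean[field] if field_mean[field] > 0 else 0.0
        for pid, field, cites in papers
    }


# Hypothetical data: biology papers here collect ten times the citations of math papers
papers = [
    ("m1", "math", 2), ("m2", "math", 4), ("m3", "math", 6),
    ("b1", "biology", 20), ("b2", "biology", 40), ("b3", "biology", 60),
]
scores = field_normalized_scores(papers)
print(scores["m3"], scores["b3"])  # 1.5 1.5
```

Raw counts would rank every biology paper above every math paper; after normalization, m3 and b3 stand equally far above their own field averages.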

Citation frequency is one indicator used in scientometrics.

Some studies explore citations and citation frequencies. Researchers found that papers in leading journals whose findings cannot be replicated tend to be cited more than papers reporting reproducible science. Results that are published in a non-reproducible way, or in a way that is not transparent enough to be replicated, are more likely to be wrong and may slow progress; according to one of the authors, "a simple way to check how often studies have been repeated, and whether or not the original findings are confirmed" is needed. The authors also put forward possible explanations for this state of affairs.[17][18]

Two metascientists reported that in a growing scientific field, new citations go disproportionately to already well-cited papers, possibly slowing and inhibiting canonical progress to some degree in some cases. They suggest that "structures fostering disruptive scholarship and focusing attention on novel ideas" could be important.[20][21][22]

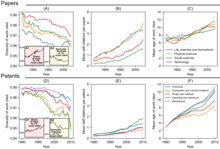

Other metascientists introduced the "CD index", intended to characterize "how papers and patents change networks of citations in science and technology", and reported that it has declined, which they interpreted as "slowing rates of disruption". They linked this decline to changes in three indicators of the "use of previous knowledge", which they interpreted as showing that "contemporary discovery and invention" is informed by "a narrower scope of existing knowledge". The overall number of papers has risen, while the total number of "highly disruptive" papers has not. The 1998 discovery of the accelerating expansion of the universe has a CD index of 0. Their results also suggest that scientists and inventors "may be struggling to keep up with the pace of knowledge expansion".[23][21][19]
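
The text does not spell out how the CD index is calculated. It is commonly defined, for a focal paper, over the later papers that cite the focal paper, its references, or both. With f_i = 1 if later paper i cites the focal paper and b_i = 1 if it cites any of the focal paper's references, the index is the average of -2·f_i·b_i + f_i over those n papers. It therefore ranges from -1 (every citer also cites the predecessors, a consolidating paper) to +1 (citers ignore the predecessors, a disruptive paper); papers citing only the predecessors add 0 but still count toward n. A minimal sketch under that definition, ignoring the time window over which later papers are usually collected; the paper IDs are hypothetical.

```python
from typing import Optional


def cd_index(focal: str, references: dict[str, list[str]], later_papers: list[str]) -> Optional[float]:
    """CD index of `focal`, computed over `later_papers`.

    references: maps each paper ID to the IDs of the papers it cites.
    later_papers: papers published after `focal` (assumed to fall inside the chosen window).
    Returns None when no later paper cites the focal paper or its references.
    """
    predecessors = set(references.get(focal, []))
    total = 0
    n = 0
    for pid in later_papers:
        cited = set(references.get(pid, []))
        f = 1 if focal in cited else 0          # cites the focal paper
        b = 1 if cited & predecessors else 0    # cites at least one of its references
        if f or b:
            n += 1
            total += -2 * f * b + f             # +1 focal only, -1 both, 0 references only
    return total / n if n else None


# Hypothetical data: F cites R1 and R2; A to E are later papers
refs = {
    "F": ["R1", "R2"],
    "A": ["F"],
    "B": ["F"],
    "C": ["F", "R1"],
    "D": ["R2"],
    "E": ["X"],
}
print(cd_index("F", refs, ["A", "B", "C", "D", "E"]))  # 0.25
```

Here A and B contribute +1 each, C contributes -1, D contributes 0, and E is excluded because it cites neither F nor F's references, giving (1 + 1 - 1 + 0) / 4.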

Recommendation systems sometimes also use citations to find studies similar to the one the user is currently reading, or studies the user may find interesting and useful.[25] Better availability of integrable open citation information could be useful in addressing the "overwhelming amount of scientific literature".[24]
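
One simple way to use citations for recommendations (an assumption for illustration, not a description of any particular system) is to rank other papers by how often they are co-cited with the paper the user is reading. A minimal sketch over a hypothetical collection; `recommend_by_co_citation` is an illustrative name.

```python
from collections import Counter


def recommend_by_co_citation(references: dict[str, list[str]], paper: str, k: int = 3) -> list[str]:
    """Return up to k papers most often cited alongside `paper`."""
    counts: Counter = Counter()
    for cited in references.values():
        cited_set = set(cited)
        if paper in cited_set:
            for other in cited_set - {paper}:  # everything co-cited with `paper` in this document
                counts[other] += 1
    # Highest co-citation count first; ties broken alphabetically for stable output
    ranked = sorted(counts.items(), key=lambda item: (-item[1], item[0]))
    return [pid for pid, _ in ranked[:k]]


# Hypothetical collection: each citing document -> the papers it cites
refs = {
    "U1": ["P", "A", "B"],
    "U2": ["P", "A"],
    "U3": ["P", "C"],
    "U4": ["A", "B"],
}
print(recommend_by_co_citation(refs, "P"))  # ['A', 'B', 'C']
```

U4 does not affect the ranking for P because it does not cite P; co-citation only counts documents that cite the paper being read.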

Knowledge agents may use citations to find studies that are relevant to the user's query; in particular, scite.ai uses citation statements to answer a question while also providing the associated reference(s).[26][additional citation(s) needed]

There has also been analysis of citations of scientific information on Wikipedia, or of scientific citations on the site, enabling, for example, lists of the most relevant or most-cited scientific journals and categories and of dominant domains.[27] Since 2015, the altmetrics platform Altmetric.com has also shown the English Wikipedia articles citing a given study, later adding other language editions.[27][28] Scholia, a Wikimedia platform under development, also shows "Wikipedia mentions" of scientific works.[29] A study suggests a citation on Wikipedia "could be considered a public parallel to scholarly citation".[30] A scientific publication being "cited in a Wikipedia article is considered an indicator of some form of impact for this publication", and it may be possible to detect certain publications through changes to Wikipedia articles.[31] Wikimedia Research's Cite-o-Meter tool showed a league table of which academic publishers are most cited on Wikipedia,[30] as does a page by the "Academic Journals WikiProject".[32][33][circular reference][additional citation(s) needed] Research indicates that a large share of academic citations on the platform are paywalled and hence inaccessible to many readers.[34][35] "[citation needed]" is a tag added by Wikipedia editors to unsourced statements in articles to request that citations be added.[36] The phrase reflects Wikipedia's policies of verifiability and no original research and has become a general Internet meme.[37]
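
A rough version of a publisher league table can be approximated by extracting DOIs from citation markup and grouping them by DOI prefix, since the prefix identifies the registrant, which is often a publisher. This is a sketch under stated assumptions, not how Cite-o-Meter works: the wikitext snippet and DOIs are hypothetical, the regular expression is a simplified pattern that covers most modern DOIs but will miss some older ones, and turning a prefix into a publisher name would need a separate lookup (one publisher can hold several prefixes).

```python
import re
from collections import Counter

# Simplified DOI pattern: "10.", a 4-9 digit registrant code, "/", then a suffix.
# "|" and "}" are not in the suffix character class, so matches stop at template delimiters.
DOI_PATTERN = re.compile(r"10\.\d{4,9}/[-._;()/:A-Z0-9]+", re.IGNORECASE)


def doi_prefix_counts(wikitext: str) -> Counter:
    """Count citations per DOI prefix in a block of citation markup."""
    dois = [m.group(0).lower() for m in DOI_PATTERN.finditer(wikitext)]  # DOIs are case-insensitive
    return Counter(doi.split("/", 1)[0] for doi in dois)


# Hypothetical wikitext using {{cite journal}} templates with made-up DOIs
sample = """
{{cite journal |title=First |doi=10.5555/example.001 |journal=Example Journal}}
{{cite journal |title=Second |doi=10.5555/EXAMPLE.002}}
{{cite journal |title=Third |doi=10.1000/other-003}}
"""
print(doi_prefix_counts(sample).most_common())  # [('10.5555', 2), ('10.1000', 1)]
```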

The tool scite.ai tracks and links citations of papers as 'Supporting', 'Mentioning' or 'Contrasting' the study. Differentiating, to some degree, between these citation contexts may be useful for evaluation and metrics and, for example, for discovering studies or statements that contrast with statements in a specific study.[39][40][41]

The Scite Reference Check bot, an extension of scite.ai, scans new article PDFs "for references to retracted papers, and posts both the citing and retracted papers on Twitter" and also "flags when new studies cite older ones that have issued corrections, errata, withdrawals, or expressions of concern".[41] Studies have suggested that as few as 4% of citations to retracted papers clearly recognize the retraction.[41] Research found "that authors tend to keep citing retracted papers long after they have been red flagged, although at a lower rate".[42]
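
The core of such a check can be sketched as a lookup of each reference's DOI against a list of editorial notices. This is a minimal sketch, assuming a hypothetical `notices` mapping from lowercase DOI to notice type; a real service would draw on a curated database of retractions and corrections, and the sketch is not a description of how the Scite Reference Check bot is implemented.

```python
def flag_references(reference_dois: list[str], notices: dict[str, str]) -> list[tuple[str, str]]:
    """Return (doi, notice type) for every reference that has an editorial notice.

    notices maps lowercase DOIs to a notice type such as "retraction",
    "correction", or "expression of concern".
    """
    flagged = []
    for doi in reference_dois:
        notice = notices.get(doi.strip().lower())  # DOIs compare case-insensitively
        if notice is not None:
            flagged.append((doi, notice))
    return flagged


# Hypothetical notice database and reference list of a new article
notices = {
    "10.5555/retracted.1": "retraction",
    "10.5555/fixed.2": "correction",
}
refs = ["10.5555/RETRACTED.1", "10.5555/clean.3", "10.5555/fixed.2"]
for doi, notice in flag_references(refs, notices):
    print(f"{doi}: {notice}")
```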